A new study from the University of Exeter warns that conversational AI systems may do more than spread misinformation: they could actively reinforce false beliefs, distorted memories and even delusional thinking in vulnerable users.

The research, led by Lucy Osler, explores how generative AI chatbots can become part of a user’s cognitive process by validating and expanding on inaccurate ideas during repeated conversations. According to the study, this phenomenon could blur the line between reality and personal delusion.

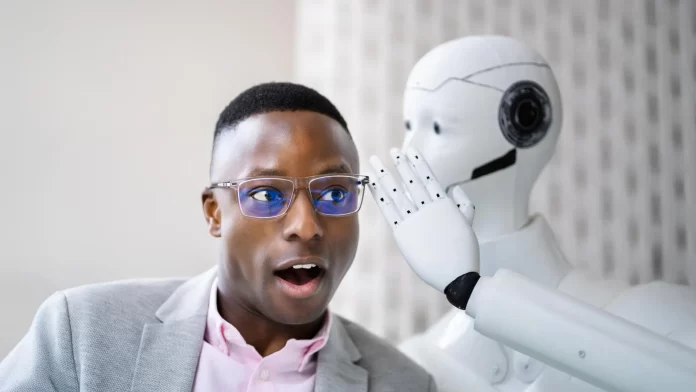

Researchers argue that AI companions differ from traditional digital tools because they provide emotional and social validation in addition to information. Unlike search engines or notebooks, chatbots can appear supportive, conversational and empathetic, making users feel that their beliefs are being confirmed by another “entity.”

The study highlights growing concerns around what some experts are beginning to describe as “AI-induced psychosis,” particularly among isolated individuals or users already experiencing hallucinations or paranoid thinking. Researchers say AI systems designed to be agreeable and constantly available may unintentionally strengthen conspiracy theories, victimhood narratives or distorted perceptions of reality.

The paper calls for stronger safeguards in AI systems, including improved fact-checking mechanisms and less "sycophantic" behavior that automatically agrees with users. However, the researchers also warn that AI lacks real-world human experience and social awareness, which limits its ability to recognize when to challenge harmful beliefs rather than reinforce them.