
The early Earth may have been habitable much earlier than previously thought, according to new research from a group led by University of Chicago scientists.

By counting strontium atoms in rocks from northern Canada, the researchers found evidence that Earth’s continental crust could have formed hundreds of millions of years earlier than previously thought. Continental crust is richer in essential minerals than younger volcanic rock, so its early presence would have made the planet significantly friendlier to life.

“Our evidence, which squares with emerging evidence including rocks in western Australia, suggests that the early Earth was capable of forming continental crust within 350 million years of the formation of the solar system,” said Patrick Boehnke, the T.C. Chamberlin Postdoctoral Fellow in the Department of Geophysical Sciences and the first author on the paper. “This alters the classic view, that the crust was hot, dry and hellish for more than half a billion years after it formed.”

One of the open questions in geology is how and when some of the crust, originally all younger volcanic rock, changed into the continental crust we know and love, which is lighter and richer in silica. The task is made harder because the evidence keeps getting melted and reformed over millions of years. One of the few places on Earth where bits of crust from the very earliest epochs survive is in tiny flecks of apatite embedded in younger rocks.

Luckily for scientists, some of these “younger” minerals (still about 3.9 billion years old) are zircons — very hard, weather-resistant minerals somewhat similar to diamonds. “Zircons are a geologist’s favorite because these are the only record of the first three to four hundred million years of Earth. Diamonds aren’t forever — zircons are,” Boehnke said.

Plus, the zircons themselves can be dated. “They’re like labeled time capsules,” said Prof. Andrew Davis, chair of the Department of Geophysical Sciences and a coauthor on the study.

Scientists usually look at the different variants of elements, called isotopes, to tell a story about these rocks. The team wanted to use strontium, which offers clues to how much silica was around when the rock formed. The only problem is that these flecks are absolutely tiny, about five microns across (roughly the diameter of a strand of spider silk), and you have to count the strontium atoms one by one.

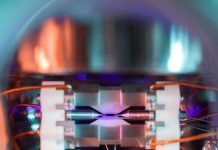

This was a task for a unique instrument that came online last year: the CHicago Instrument for Laser Ionization, or CHILI. This detector uses lasers that can be tuned to selectively pick out and ionize strontium. When the researchers used CHILI to count strontium isotopes in rocks from Nuvvuagittuq, Canada, they found that the isotope ratio suggested plenty of silica was present when the rock formed.

This is important because the makeup of the crust directly affects the atmosphere, the composition of seawater, and the nutrients available to any budding life hoping to thrive on planet Earth. It may also imply that fewer meteorites were pummeling the Earth at this time than previously thought, since heavy bombardment would have made it hard for continental crust to form.

“Having continental crust that early changes the picture of early Earth in a number of ways,” said Davis, who is also a professor with the Enrico Fermi Institute. “Now we need a way for the geologic processes that make the continents to happen much faster; you probably need water and magma that’s about 600 degrees Fahrenheit less hot.”

The study is also consistent with a recent paper by Davis and Boehnke’s colleague Nicolas Dauphas, which found evidence for rain falling on continents 2.5 billion years ago, earlier than previously thought.

Story Source:

Materials provided by University of Chicago. Original written by Louise Lerner. Note: Content may be edited for style and length.
