The UK’s data regulator has published a set of standards which it believes will force tech companies to take protecting children online seriously.

The Information Commissioner’s Office (ICO) today unveiled its age appropriate design code, which sets out 15 standards for online services.

It is aimed at companies responsible for designing, developing or providing online services such as social media, apps, online games and streaming platforms that will likely be accessed by children.

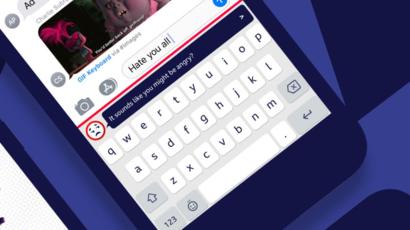

The new code will require digital services to provide an automatic base level of data protection for children when they download a new app or game or visit a website.

This means privacy settings should be set to high by default and alerts should not be used to encourage children to weaken their settings.

Location settings and profiling — which allows firms to serve up targeted content — should be switched off by default, while data collection and sharing should be minimised, the watchdog said.

“In an age when children learn how to use an iPad before they ride a bike, it is right that organisations designing and developing online services do so with the best interests of children in mind,” said information commissioner Elizabeth Denham. “Children’s privacy must not be traded in the chase for profit.”

The code, which is the first of its kind, is rooted in the General Data Protection Regulation (GDPR) rules rolled out in 2018.

It will be put before parliament for approval, after which companies will have 12 months to update their practices. The code is expected to come into full effect by autumn 2021.

Andy Burrows, head of child safety online policy at the NSPCC, said: “This transformative code will force high-risk social networks to finally take online harm seriously and they will suffer tough consequences if they fail to do so.