
Beliefs can be hard to change, even if they are scientifically wrong. But those on the fence about an idea can be swayed after hearing facts related to the misinformation, according to a study led by Princeton University.

After conducting an experimental study, the researchers found that listening to a speaker repeating a belief does, in fact, increase the believability of the statement, especially if the person somewhat believes it already. But for those who haven’t committed to particular beliefs, hearing correct information can override the myths.

For example, if a policymaker wants people to forget the inaccurate belief that “Reading in dim light can damage children’s eyes,” they could instead repeatedly say, “Children who spend less time outdoors are at greater risk to develop nearsightedness.” After repeatedly hearing the correct information, those on the fence are more likely to remember it and, more importantly, less likely to remember the misinformation. People with entrenched beliefs are unlikely to be swayed either way.

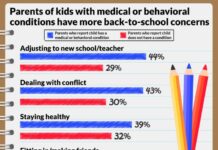

The sample was not nationally representative, so the researchers urge caution when extrapolating the findings to the general population, but they believe the findings would replicate on a larger scale. The findings, published in the academic journal Cognition, have the potential to guide interventions aimed at correcting misinformation in vulnerable communities.

“In today’s informational environment, where inaccurate information and beliefs are widespread, policymakers would be well served by learning strategies to prevent the entrenchment of these beliefs at a population level,” said study co-author Alin Coman, assistant professor of psychology at Princeton’s Woodrow Wilson School of Public and International Affairs and Department of Psychology.

Coman and Madalina Vlasceanu, a graduate student at Princeton, conducted a main study, with a final total of 58 participants, and a replication study, with 88 participants.

In the main study, a set of 24 statements was distributed to participants. These statements, which contained eight myths and 16 correct pieces of information in total, fell into four categories: nutrition, allergies, vision and health.

Myths were statements that people commonly endorse as true but that are actually false, such as “Crying helps babies’ lungs develop.” The correct and related piece of information would be: “Pneumonia is the prime cause of death in children.”

First, the participants were asked to carefully read these statements, which were described as statements “frequently encountered on the internet.” After reading, participants rated how much they believed each statement was true on a scale from one (“not at all”) to seven (“very much so”). Next, they listened to an audio recording of a person remembering some of the beliefs the participants had read initially. In the recording, the speaker spoke naturally, as someone would when recalling information. The listeners were asked to determine whether the speaker was accurately remembering the original content. Each participant listened to an audio recording containing two of the correct statements from each of two categories.

Participants were then given the category name — nutrition, allergies, vision, or health — and were instructed to recall the statements they first read. Finally, they were presented with the initial statements and asked to rate them based on accuracy and scientific support.

The researchers found that listeners do experience changes in their beliefs after listening to information shared by another person. In particular, the ease with which a belief comes to mind affects its believability.

If a belief was mentioned by the person in the audio, it was remembered better and believed more by the listener. If, however, a belief was from the same category as the mentioned belief (but not mentioned itself), it was more likely to be forgotten and believed less by the listener. These effects of forgetting and believing occur for both accurate and inaccurate beliefs.

The results are particularly meaningful for policymakers interested in having an impact at a community level, especially for health-relevant inaccurate beliefs. Coman and his collaborators are currently expanding upon this study, looking at 12-member groups where people are exchanging information in a lab-created social network.
